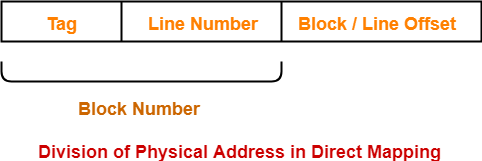

Generally, if a cache line is n bytes long (n is usually some power of two) then that cache line will hold n bytes from main memory that fall on an n-byte boundary. So cache line #0 might correspond to addresses $10000.$1000F and cache line #1 might correspond to addresses $21400.$2140F. The idea of a cache system is that we can attach a different (non-contiguous) address to each of the cache lines. Instead, cache organization is usually in blocks of cache lines with each line containing some number of bytes (typically a small number that is a power of two like 16, 32, or 64), see Figure 6.2.įigure 6.2 Possible Organization of an 8 Kilobyte Cache

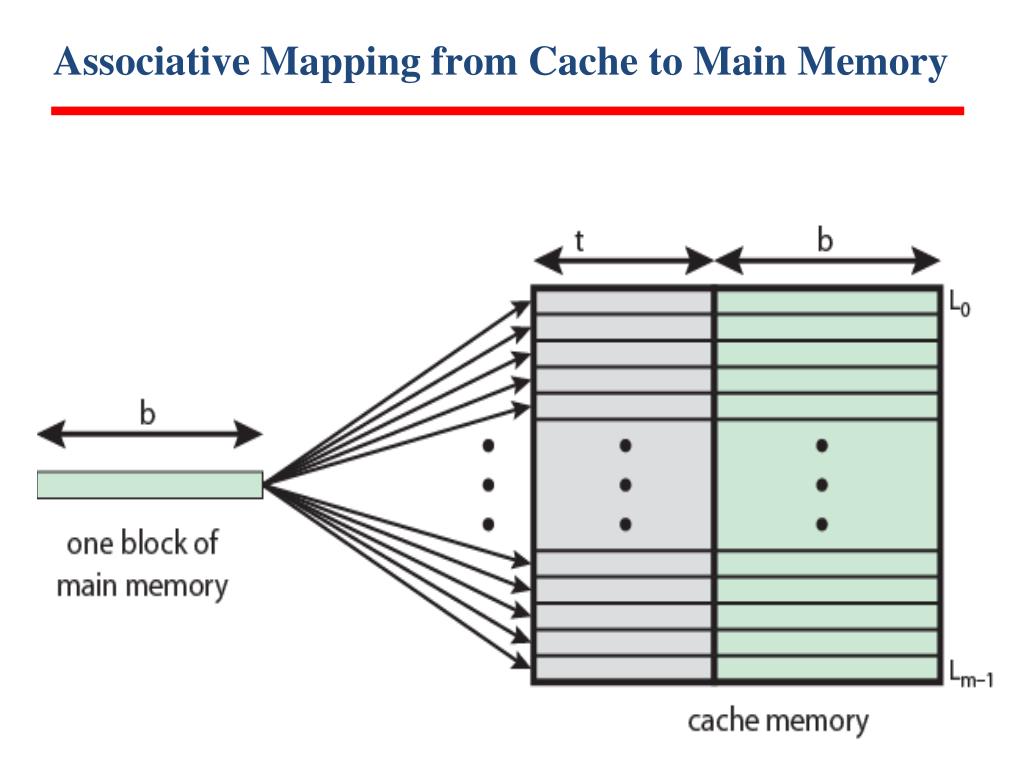

Therefore, the cache design has got to accommodate the fact that it must map data objects at widely varying addresses in memory.Īs noted in the previous section, cache memory is not organized as a group of bytes. In general, the data is spread out all over the address space. Unfortunately, the data rarely sits in contiguous memory locations usually, there's a few bytes here, a few bytes there, and some bytes somewhere else. If the cache is the same size as the typical amount of data the program access at any one given time, then we can put that data into the cache and access most of the data at a very high speed. The basic idea behind a cache is that a program only access a small amount of data at a given time. However, a good question is "how exactly does the cache do this?" Another might be "what happens when the cache is full and the CPU is requesting additional data not in the cache?" In this section, we'll take a look at the internal cache organization and try to answer these questions along with a few others.

Up to this point, cache has been this magical place that automatically stores data when we need it, perhaps fetching new data as the CPU requires it.
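To make the idea of an n-byte cache line on an n-byte boundary concrete, here is a minimal Python sketch. It assumes 16-byte lines and an 8 KB cache; the names `LINE_SIZE`, `CACHE_SIZE`, `line_base`, `line_offset`, and `line_range` are made up for this illustration and do not come from any real hardware interface. Because the line size is a power of two, masking off the low bits of an address gives the start of the line that would hold it.

```python
# Assumed parameters for illustration only.
LINE_SIZE = 16          # bytes per cache line (must be a power of two)
CACHE_SIZE = 8 * 1024   # total cache capacity in bytes (8 KB)

# Number of lines such a cache would hold under these assumptions.
NUM_LINES = CACHE_SIZE // LINE_SIZE


def line_base(addr: int, line_size: int = LINE_SIZE) -> int:
    """Address of the first byte of the line-sized block containing addr."""
    # Clearing the low log2(line_size) bits rounds down to an n-byte boundary.
    return addr & ~(line_size - 1)


def line_offset(addr: int, line_size: int = LINE_SIZE) -> int:
    """Position of addr within its line (0 .. line_size - 1)."""
    return addr & (line_size - 1)


def line_range(addr: int, line_size: int = LINE_SIZE) -> tuple[int, int]:
    """First and last main-memory address covered by the line holding addr."""
    base = line_base(addr, line_size)
    return base, base + line_size - 1


if __name__ == "__main__":
    # Two addresses from widely separated parts of memory.
    for addr in (0x10007, 0x2140F):
        lo, hi = line_range(addr)
        print(f"${addr:05X} falls in ${lo:05X}..${hi:05X} "
              f"at offset {line_offset(addr)}")
    print(f"{NUM_LINES} lines of {LINE_SIZE} bytes each")
```

Under these assumptions, an address such as $10007 belongs to the block $10000..$1000F, and an unrelated address such as $2140F belongs to $21400..$2140F, so two different cache lines can hold data from two non-contiguous regions of memory.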
